As you may have heard, the robots are coming.

Intelligent and skilled, a new generation of machines is poised to forever change the way we work (or don’t, if you’re one of the 85 million people projected to lose their jobs to AI by 2025, according to a 2020 report from the World Economic Forum).

In 2023, the pace of change seems to have accelerated even more, with tools like DALL-E and ChatGPT going from niche curiosities for nerds to household names in a matter of months. It’s enough to leave any leader a bit disoriented – and concerned – about how they might fit into this brave new world.

Here’s the good news: organizations still need flesh-and-blood leaders, even in the age of AI. And the leaders who successfully ride this wave of innovation won’t do so by mimicking machines. Instead, leaders can thrive by tapping into their uniquely human capabilities.

Cultivating emotional intelligence is one of the most important – and effective – ways to do this. But what exactly is it? In my new book, The Threshold: Leading in the Age of AI, I draw on the framework devised by psychologist Howard Gardner to define emotional intelligence as the ability to acquire and apply knowledge and skills in two key areas:

- Intrapersonal relating (understanding yourself, what you feel and what you want); and

- Interpersonal relating (sensing others’ intentions and desires)

You can probably imagine a variety of ways in which these skills and their underlying mindsets would be useful. Still, the question remains: how exactly are they going to help ensure you have a place in your organization for years to come?

To answer that, it helps to have a high-level understanding of the trends shaping AI’s development.

The AI arms race

As 2022 drew to a close, the term “ChatGPT” was barely uttered anywhere on the internet. By February 2023, Google Trends revealed that it had become a more popular search query than PS5, the best-selling video game console in the United States. Clearly, the AI-powered writing tool has captured people’s imaginations.

It’s also sparked a heated competition to develop newer, more powerful AI tools (and other tools to counteract those tools). When students began using ChatGPT to cheat, other students developed a program to detect ChatGPT-generated text. And when Microsoft’s investment in OpenAI, the company behind ChatGPT, started garnering headlines, Google was quick to rush out Bard, its own AI-powered chatbot. A host of other competitors soon followed suit with AI tools of their own, including Chinese tech giants Baidu and Alibaba.

It’s an exhilarating spectacle to watch and an exhausting one to be involved in. We’ve seen this endless game of tit-for-tat play out before. In the early days of internet search, Google’s algorithm was renowned for returning much better results than its competitors. Then, a robust industry of SEO marketers arose and began gaming the system. Google responded with updates to the algorithm; the SEO marketers developed new tricks. The cycle repeated again and again. Now many believe that Google Search is worse than it was before.

It’s too soon to say if Google will go the way of Kodak and Nokia, once-iconic brands that fizzled out in large part due to their leaders’ myopia. But these cautionary tales do make one thing clear: to thrive in an age of disruption, it helps to play a different game than everyone else.

In this context, it means that leaders who want to safeguard their livelihoods can do so not by trying to out-machine the machines, but by becoming more fully and authentically human. It’s an idea with roots in the past – Prof. Daniel Goleman’s work on emotional intelligence has been a mainstay of leadership theory for years – and now it’s blossoming in new ways in the age of AI.

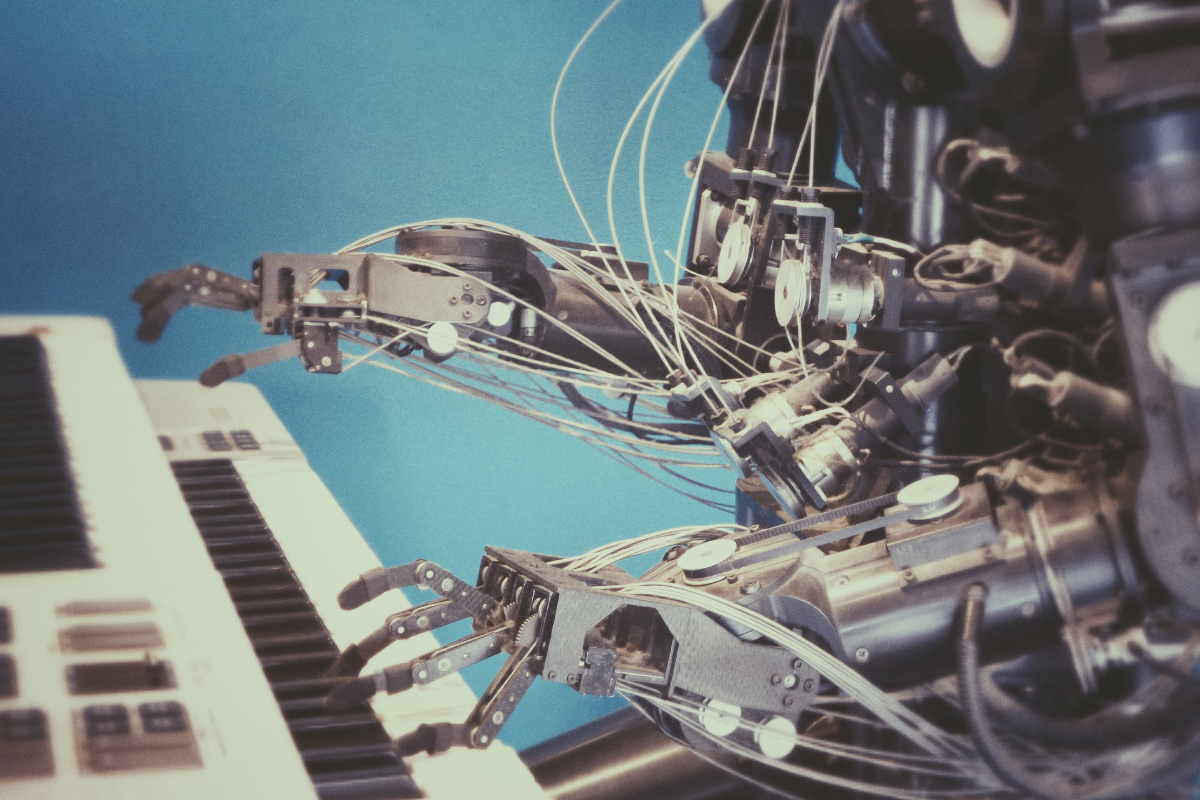

Why AI can’t replicate humans’ emotional intelligence

As we’ve seen, AI has gotten quite proficient at creating convincing simulacra of human writing. It’s also demonstrated an ability to draw like a human and talk like a human. So why can’t AI interpret emotions like a human as well?

There are several reasons.

As I describe in my book, it starts with a matter of mathematics. Tools like ChatGPT are effective at replicating human writing because they’re trained on enormous data sets in relatively narrowly defined areas (for example, if AI scans a thousand sentences, it can produce a sentence of its own with relative ease). However, emotions are a much trickier subject.

An AI program that has never had a glass of milk can’t imagine what it tastes like, or what childhood memories it might recall. An AI program that has never fallen in love has no point of reference for the excitement of a relationship beginning or the pain of it ending.

Such sensations are simply beyond AI’s ability to experience.

I spoke with a scientist at DeepMind, the company behind AlphaGo, an AI program that made headlines in 2017 for beating a human champion at the complex strategy game Go. They told me that both algorithmic and hardware inefficiencies make it unlikely AI will have the raw power needed to master the subtleties and idiosyncrasies of human feeling.

It turns out when it comes to interpreting emotions, AI even has trouble achieving more modest goals. Despite some tech companies’ claims, AI has proven unable to correctly interpret people’s facial expressions on a consistent basis. Whether facial expressions are an accurate proxy for emotional status is, of course, a matter of debate itself.

Couldn’t AI learn to get better at this, though, to the point where it exceeds human emotional intelligence across the board? It’s possible in theory, though unlikely in practice. Most psychologists agree that humans learn by doing and, in the process, through making mistakes. Experimenting, in other words. But many experiments (even ones that could potentially yield useful information) are beyond ethical boundaries. Allowing robots to make mistakes could prove costly or even deadly. It’s hard to argue that risking harm to huge numbers of people would be worth the reward of smarter AI.

So, if robots aren’t going to fill this niche, humans will have to do it.

Why emotional intelligence is vital in the age of AI

AI is a tool and, like any tool, its impact on society depends on the people who wield it. An organization that prioritizes human flourishing and puts it at the core of its mission is likely, though not certain, to create products and services that support this flourishing. As for an organization guided by less noble principles, well, you can see where this is going.

For example, consider NoiseGPT, a tool that turns text into celebrity voices. On its website, NoiseGPT boasts about its values of “no censorship” and “ultimate free speech.” The words take on a somewhat worrisome tone in light of the fact that similar tools have almost instantly been abused to put racist, homophobic and violent words into the mouths of celebrities. Compare this with ChatGPT, which – despite its assorted shortcomings – recently went viral for refusing to use a racial slur, much to the chagrin of certain users.

Emotional intelligence is key to the development, implementation and use of AI tools.

Without it, critics’ worst fears could be realized overnight: The internet could become an even more chaotic, hateful and deceitful place.

However, we have a clear and appealing alternative to this dystopian vision. And we have the tools to make it a reality.

How to harness the power of emotional intelligence in your organization

Ideas are nice to think about, but they gain their real power when put into practice. Fortunately, as a human, you already have emotional intelligence – and any workplace provides plenty of opportunities for strengthening and deploying it.

Making time for stillness is a good place to start. This could involve attending a dedicated offsite event; one of my personal favorites is known as “labyrinth,” a kind of walking meditation through a maze-like environment. Or you could simply set aside 10–15 minutes of scheduled meeting time for deep reflection. A still mind is enormously helpful in understanding both your emotions and those of others. You’ll be pleasantly surprised by the quality of insights that arise when your mind isn’t constantly reacting to a bombardment of outside stimuli.

A still mind also makes you a much better listener.

Most of us tend to pay partial attention to others during meetings or conversations, because our minds are racing to anticipate their next point or to plan our own response. You can miss a lot when you’re not paying attention to what’s going on around you. In my book, I recount an experience of literally walking face-first into a tree because I was preoccupied with other thoughts.

When you’re fully present, on the other hand, people notice it. They feel heard and respected, and motivated to do their best. Satisfaction and productivity skyrocket when leaders show they care for others by calming their minds and paying magnificent attention to colleagues. In other words, when leaders bring their full emotional intelligence to their work.

And that’s something only humans can do.

Dr Nick Chatrath is an expert in leadership and organizational transformation whose purpose is helping humans flourish. He holds a doctorate from Oxford University and serves as managing director for a global leadership training firm. His newly published book is “The Threshold: Leading in the Age of AI.”